To me, the conversation about AI often starts in the wrong place. It usually opens with questions like: Will it replace jobs? Will it outperform humans? Will it make certain roles obsolete?

These questions are understandable, and most headlines frame AI as either a miracle or a threat. As a result, organisations feel growing pressure to respond quickly. But in the organisations I work with, AI does not immediately start replacing people. It does, however, do something quite unexpected: it brings the organisation's leadership to light in a surprising way.

Let me elaborate. When AI enters an organisation, it does not simply automate tasks. It magnifies decision-making patterns. It accelerates whatever clarity or confusion already exists. It amplifies trust where trust is present, and it intensifies hesitation where trust is fragile. AI becomes less of a technological disruption and more of a diagnostic instrument. In other words, if your processes are not in order, introducing AI tooling will expose it. And that is why this moment matters. Not because AI is taking over, but because it is holding up a mirror.

This newsletter is about that mirror. It explores what AI reveals about leadership maturity, decision clarity, and organisational design. Don’t worry, it is not a technical piece, nor is it a warning about automation. It is an invitation to look at AI as a leadership test. Because in my experience, the organisations that struggle most with AI are rarely lacking tools. They are lacking alignment, trust, and distributed authority.

AI as a mirror, not a machine

The easiest way to misunderstand AI is to treat it as a tool that sits outside the organisation. Something that can be implemented, governed, or scaled like any other system upgrade.

In reality, AI integrates itself into the bloodstream of how work gets done in your organisation. It reshapes how decisions are made, how information flows, and how accountability is experienced. It changes the rhythm of work, often without anyone formally announcing the shift.

This is where the mirror effect begins.

If decision rights are unclear before AI is introduced, AI will not solve that. If anything, I have seen it make the lack of clarity painfully visible. Teams start asking questions that may have been ignored for years: Who owns this output? Who approves this insight? Who is accountable if the recommendation is wrong? What was previously tolerated as an unspoken agreement suddenly becomes an operational risk.

I have seen leadership teams surprised by this. They assumed AI would make things faster. Instead, it made structural vagueness impossible to ignore. If trust between leaders and teams is already fragile, AI adoption makes it visible. Leaders who feel insecure about losing control may override AI suggestions without hesitation. Teams who feel watched may interpret AI tools as surveillance rather than support. What was once a cultural undercurrent becomes explicit pressure.

And if leadership has not invested in clarity of purpose and direction, AI will only increase the noise. The speed of output might grow, but the quality of decisions does not necessarily improve. Surprisingly, instead of sharpening focus, the organisation may simply become confused faster.

AI, in this sense, is not neutral. It amplifies the maturity of the system into which it is introduced. Where leadership conditions are strong, AI enhances capacity. Where they are weak, AI exposes fault lines.

The mirror does not lie or judge. It simply reflects.

It is not AI vs. humans. It is AI and humans

Much of the public narrative around AI is framed as a competition: AI versus humans. Efficiency versus empathy. Automation versus meaning. This black-and-white thinking may be dramatic, but it is deeply misleading.

The real shift is not replacement. It is integration. AI can expand cognitive capacity significantly. It processes data at scale, spots patterns fast, and generates options faster than most people or teams can on their own. It accelerates exploration and reduces certain types of cognitive load. That is a really powerful addition to your workforce.

On the other side, we human beings bring context: ethical judgement, lived experience, and relational intelligence. We can interpret nuance in ways machines cannot replicate. At least for now. We understand consequences beyond data. We sense tension in a room. We weigh long-term trust against short-term gain. And we make decisions that are not purely logical, but relational and contextual.

The future of work is not about deciding which of these is superior. It is about learning how to orchestrate them intelligently. This is where leadership becomes central. Leaders are no longer simply directing human effort. They are shaping the interaction between human judgement and system intelligence. They are designing the conditions under which AI informs decisions but does not dictate them. And they are explaining when a decision can rely on data and when it needs human judgement.

In my work, I increasingly see this as a blending of intelligences. Personal judgement, emotional intelligence, collective intelligence, and now system intelligence. The role of leadership is not to defend one against the other, but to integrate them responsibly.

When organisations frame AI as a replacement story, fear increases. People start to defend their territory. As a result, leaders tighten their control, and adoption slows or becomes superficial. When organisations frame AI as organisational improvement, something different happens. People start to experiment, and leaders become learners as well. With this, human capability and system capability evolve together.

So, the question is less about whether AI will outperform humans in specific tasks. It’s about whether leadership can create a system where humans and AI support each other, not compete for power. And that is a leadership question, not a technology question.

What AI reveals about leadership capacity

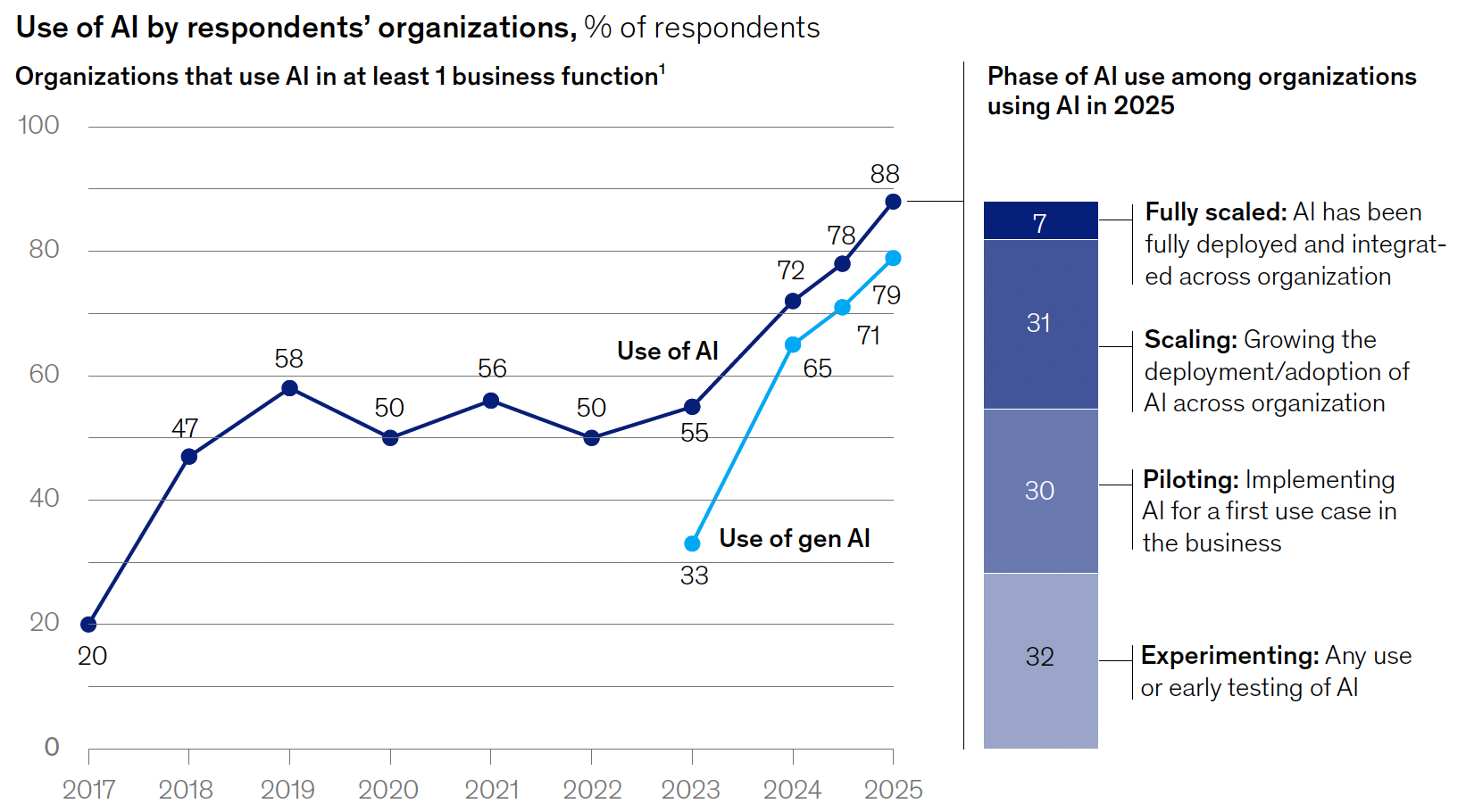

Recent research on AI adoption, such as McKinsey & Company's The State of AI (2025), highlights an interesting paradox. It indicates that individuals who use AI tools more frequently often report higher productivity. At the same time, heavier AI use can leave people feeling less connected to the organisation, even to the extent that they want to leave.

This is not because AI inherently reduces engagement. It is because efficiency alone does not sustain meaning. AI adoption reveals whether leadership has built the human infrastructure to absorb acceleration. When roles shift from doing to reviewing and from creating to supervising, leaders need to help people feel a sense of ownership and purpose. Supervision without authority can quickly turn into disengagement.

That is why I often ask leaders: if AI now performs part of the cognitive work, what remains uniquely human in this role? In my experience, when the answer to that kind of question is unclear, disengagement tends to follow.

AI also reveals how comfortable leaders are with distributed intelligence. When algorithms show insights that go against what leaders expect, they face a choice. They can trust the system, override it, or question it. That moment exposes their relationship to authority and uncertainty. If leaders lack clarity, they hesitate. If they lack confidence, they start to centralise decisions. And if they lack trust in their teams, they pull control back to themselves.

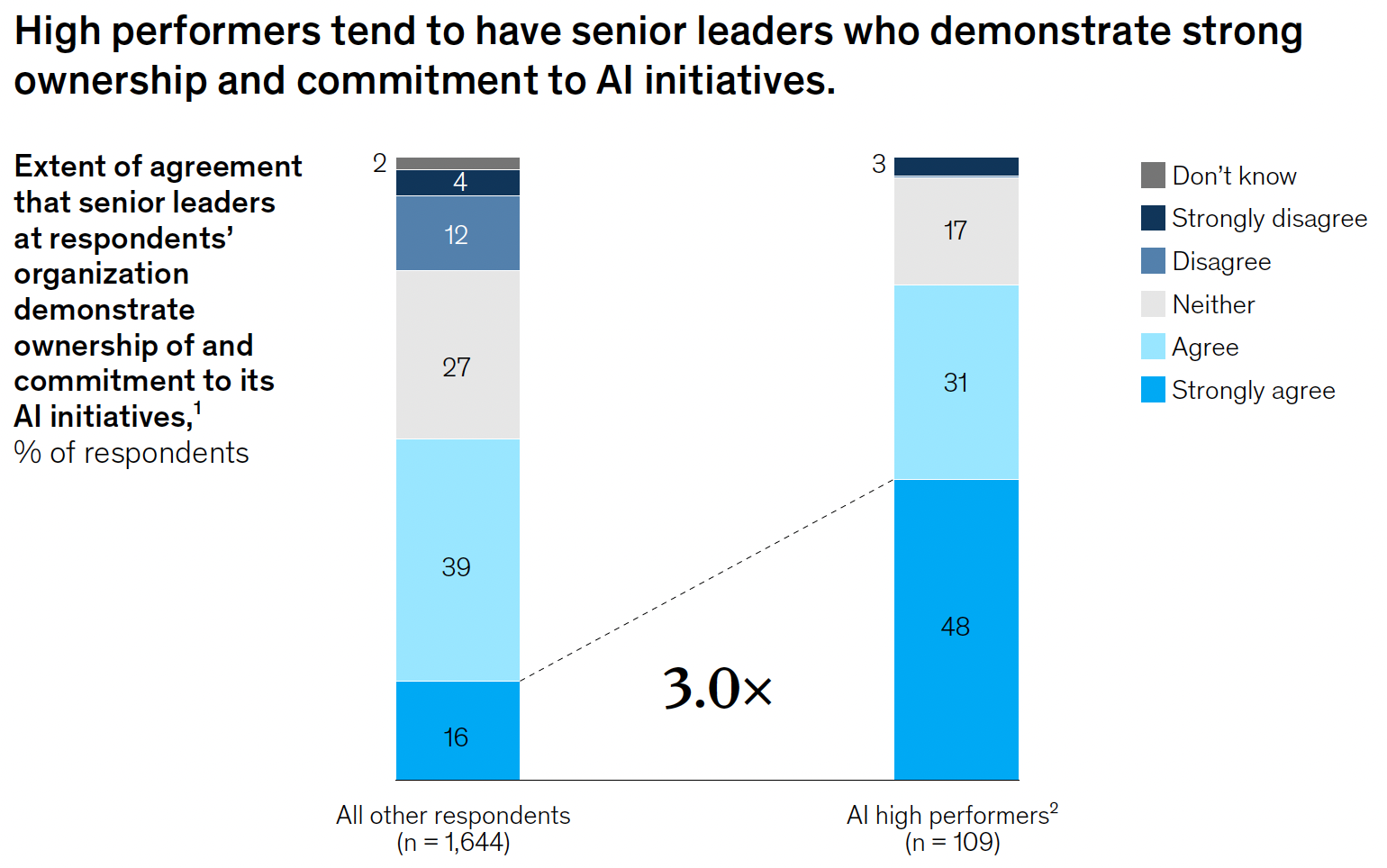

On the flip side, when leadership capacity is strong, AI adoption becomes smoother. These leaders, for example, set clear boundaries. They define when human judgement supersedes automation. They inspire curiosity rather than defensiveness when AI systems produce unexpected outputs. In this way, AI tests not only technical readiness but also emotional and relational maturity.

Where organisations often go wrong

Despite good intentions, many organisations make predictable missteps when integrating AI.

First, organisations treat AI as a technology project rather than a leadership shift. Responsibility often sits in IT or innovation departments, while leaders assume their role remains unchanged. But AI reshapes how organisations deal with authority, accountability, and decision rhythm. Without leadership alignment, implementation remains quite superficial.

Second, they centralise control under the cover of risk management. In an attempt to prevent errors, leaders restrict access and tighten approval pathways. They often don’t realise that this slows learning and weakens the adaptability that AI is meant to provide. Fear of misuse becomes more influential than the ambition for growth.

Third, they optimise efficiency without protecting human energy. We've all seen certain metrics improve, output increase, and dashboards look more promising. Yet slowly but surely, people start to experience cognitive overload. The speed of information grows while reflection time shrinks. Without deliberate attention, burnout will accelerate rather than diminish.

I have seen organisations celebrate AI dashboards while ignoring rising frustration in teams. And I assure you, over time that gap becomes costly. Mind you, these missteps are not evidence of bad leadership. They are signals that leadership has not yet adjusted to a blended reality.

What leaders have to do differently

If AI really reveals flaws in leadership, leaders should respond thoughtfully, not defensively. When technology speeds up, quite a few leaders feel a natural urge to tighten control. Unfortunately, this often means slowing down decisions or adding more layers of approval to manage risk. Yet those reactions usually increase the very friction AI is meant to reduce. Leaders should see AI not as a threat but as a signal of where their decision-making, clarity, and trust need to improve. And that requires conscious design, not reactive adjustment.

To lead well in this moment, five practices determine whether AI strengthens or destabilises your leadership:

- Clarify decision architecture before scaling AI. Define clearly which decisions are automated, which are advisory, and which remain fully human. Being vague or unclear about this creates friction later.

- Redesign meeting rhythms to incorporate AI-informed inputs. Instead of piling AI outputs onto busy agendas, focus discussions on interpretation and judgement. Shift from reporting to sense-making.

- Invest in human capability alongside technical rollout. Training should not only teach how to use tools, but also how to think with them. Encourage leaders and teams to explore limitations as openly as possibilities.

- Create and protect experimentation zones. Establish safe spaces where AI can be tested without immediate performance pressure. Learning comes to life in environments where mistakes are treated as information rather than failure.

- Try to embody humility. Leaders who publicly learn about AI tools signal that adaptation is shared and not delegated. When leaders show curiosity rather than authority, the rest of the organisation follows.

These actions may appear operational, but they are deeply cultural. They signal whether AI integration is about control or about capability. And over time, they shape whether AI becomes a source of fragmentation or coherence in your organisation.

The future is blended

We all know that AI will continue to advance, systems will grow more sophisticated, and automation will expand. None of that is surprising. Success will depend not on how fast organisations adopt tools, but on how well their leadership matures in using them.

AI does not replace leaders. It exposes whether leadership has built clarity, trust, and distributed authority. It reveals whether human capability has been supported or neglected. It shows whether the organisation can integrate speed with judgement, efficiency with meaning.

The future does not belong to AI-driven organisations. It belongs to organisations where humans and systems move intelligently together. And that future will not be determined by technology alone. It will be shaped by leadership, and that, ultimately, is where leadership proves its value.

If you are working through this shift as well and want to explore what leadership in an AI-augmented organisation truly requires, you can find out more about our approach at twinxter.com.