I’ve noticed that the conversation about AI is changing, and not just because these tools are getting better by the day. What is changing more fundamentally is the role AI is beginning to play inside the flow of work. For a while, most organisations treated AI as support. It helped people draft, summarise, analyse, or automate isolated tasks. In that model, responsibility still felt straightforward. A person used the tool, interpreted the output, and remained visibly accountable for the final decision. That boundary seemed as clear as day, but it is becoming less clear.

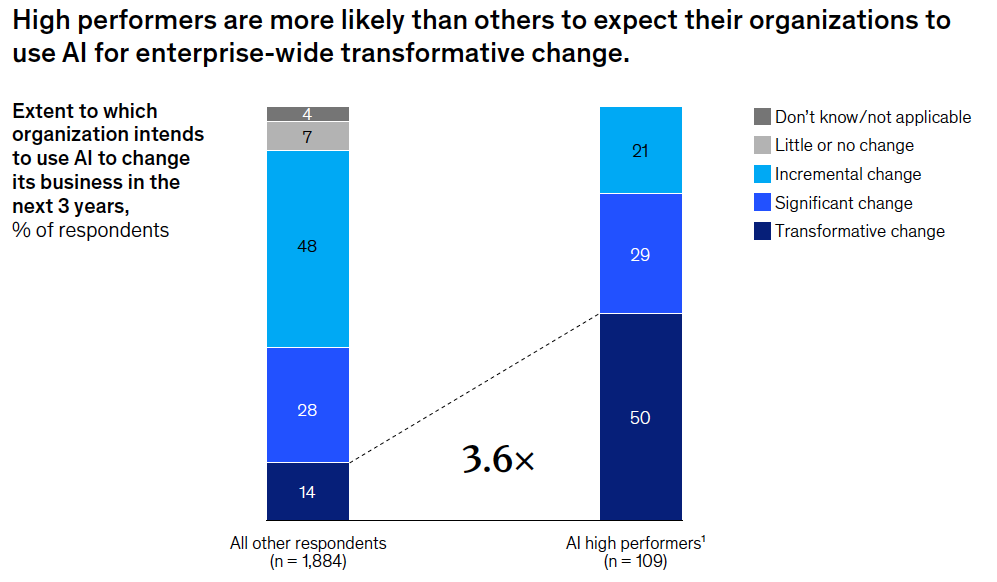

As AI becomes embedded into workflows, systems, and operational routines, it is moving closer to the action. It can now route requests, flag exceptions, and prioritise cases. It also recommends next steps and, in certain contexts, can trigger actions within set guardrails. Research on agentic systems (McKinsey, November 2025) shows that organisations are now designing work to harness the strengths of people, agents, and robots. So, the key question isn’t just what AI can do. It’s also how we share tasks between human judgement and machine-led action.

That is why this is not another newsletter about the AI hype, nor about whether AI will replace people. It is about something more pressing and structural. As AI gains autonomy inside the organisation, accountability cannot be allowed to slip away into the background. Instead, it has to become more transparent. If that does not happen, organisations may gain speed while losing clarity, trust, and ownership at the same time.

When AI moves closer to action

One of the easiest ways to underestimate this moment is to think of AI as just another productivity layer. That view is already too limited and outdated. The real shift is not simply that AI can do more. It is that AI is being woven into the mechanisms around how work moves through your organisation.

And I assure you that this matters. Once AI begins to influence flow instead of just output, it alters how we think about responsibility. A certain case may be prioritised by an AI model before a manager sees it. A recommendation may shape a hiring decision before a recruiter has fully questioned it. Or an employee issue may be escalated, routed, or categorised automatically in an unintended direction. Such actions affect people, teams, performance, and of course risk. But the visible hand behind these actions becomes much less obvious.

The McKinsey report makes this point quite clear. As technology takes on more tasks, human judgement and oversight do not become less important. On the contrary, they become more vital to keeping organisations on course. It also notes that organisations will need to redesign workflows and performance measures to reflect the combined contributions of people and intelligent machines.

That is where the title of this newsletter becomes relevant as well. If AI changes what is possible, then autonomy changes who or what appears to be performing. Accountability determines whether the organisation can clearly state who is responsible for the outcome.

Why autonomy feels attractive and why that is not enough

It is easy to understand why organisations are drawn to autonomy. I work on this with my clients on a daily basis as well. Autonomy offers less friction, fewer repetitive steps, quicker decisions, and better scale, and that is a very positive route to take. Once AI models enter the picture, though, things change somewhat. The newest operating models around AI are designed to create smoother flow across complex systems. The latest AI trends indicate that businesses are shifting towards more organised environments in which agents, robots, and humans all work together, supported and guided by clear governance, real-time visibility, and built-in oversight.

The attraction often makes organisations focus on capability first. They think about consequences later. They first ask what can be automated, sped up, or delegated. Only then do they ask questions like: what happens to accountability once those actions are no longer fully initiated by a person?

Autonomy is scaling, and so is the need for control. By 2028, 70% of organisations deploying multi-agent and multi-LLM systems are expected to use centralised coordination.

The question of what happens to accountability is an important one to ask because autonomy is not merely a technical feature. It is an organisational condition. The more a system can act, the more careful the organisation must be about decision rights, escalation paths, oversight, and review. If those conditions are weak, autonomy does not create maturity. It amplifies the lack of clarity.

This is where many organisations unintentionally create risk. They do not lose accountability all at once. They lose it through a gradual softening of ownership along the way. Language starts to shift. The system flagged it. The tool routed it. The workflow triggered it. The model recommended it. Each phrase might be correct on its own, but together they can create a gap between action and accountability. The work moves, yet no one feels fully responsible for standing behind it.

The accountability gap is where trust starts to decline

This is why accountability has to become more precise as autonomy increases. A key finding in AI predictions is that ethical AI isn’t just about bias, abstract ideas, or technical accuracy anymore. It’s increasingly about power and accountability. Who controls the AI? Who’s responsible for what it does? And whose interests does it really serve?

That change is important because it brings the conversation back to the day-to-day reality within organisations. People do not experience AI as a philosophical debate. They experience it through decisions, recommendations, workflow changes, and whether they still know where responsibility lies.

This becomes especially sensitive in areas that affect careers, opportunity, workload, and fairness. If an AI-enabled performance tool flags someone, or if a hiring tool ranks one profile above another, then the question isn’t just whether the model is technically robust. The deeper question is whether the affected person can understand what happened, question it, and recognise the human authority behind it.

That is why the accountability issue is connected to trust. Trust is not built simply because organisations say they use AI responsibly. It is built when people see clear ownership, can have decisions reviewed, and know that responsibility isn’t hidden behind systems and automation.

This is not a stand-alone compliance issue

It would be a mistake to reduce this conversation to regulation or risk management. Of course, those matter. But the deeper point is organisational.

When accountability remains unclear, several things begin to happen at once. Managers rely on outputs they do not fully understand. Teams hesitate because decision rights feel blurred. HR inherits consequences from systems it did not help shape. Employees start to wonder whether they are interacting with support or surveillance. And leaders talk about innovation while people actually experience a loss of autonomy. None of that strengthens adaptability. It weakens it.

The strongest organisations will not be the ones that only introduce more AI tools. They will be the ones that redesign work and governance at the same time. Work must be redesigned around value creation rather than layered with technology for technology’s sake. It is also worth noting that managers become a key barrier when organisations do not change how decisions, flow, and responsibility are set up.

That applies directly to AI. If it is inserted into old structures without redesigning ownership, organisations may move faster but become less integrated. They may produce more outputs while becoming less clear about how decisions are made and defended.

What responsible autonomy looks like

This is where many organisations would benefit from a more mature definition of responsible autonomy. Responsible autonomy is not an inspiring slogan. It is a design choice. It shows that your organisation has thought about where AI can help, recognises where human review is still needed, and keeps responsibility clear throughout the flow of work.

To me, the practical ingredients are already becoming clear.

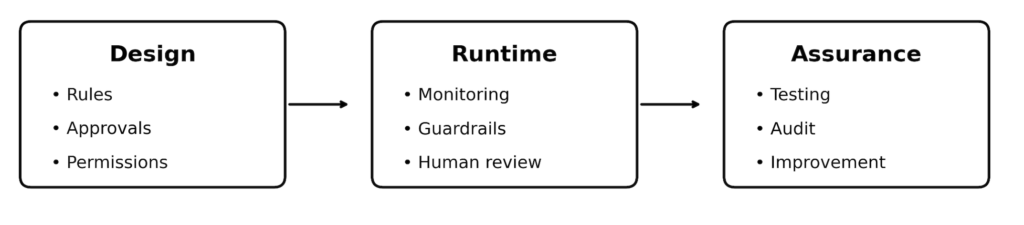

Research on agentic operations shows the importance of:

- Governance-as-code

- Human-in-the-loop design

- Continuous control and observation

- Approval frameworks

- Anomaly alerts

- Clear runtime guardrails

- Kill switches when needed

These may sound technical, but organisationally they all serve the same purpose: they prevent accountability from breaking down as autonomy increases.
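To make the idea of governance-as-code a little more tangible, here is a minimal sketch of how approval rules, a runtime guardrail, and a kill switch could be written down as something a system checks before it acts. The action names, the three modes, and the `Policy` class are my own illustrative assumptions, not taken from the research or from any specific product.

```python
from dataclasses import dataclass, field
from enum import Enum
from typing import Dict, List, Optional


class Mode(Enum):
    ADVISORY = "advisory"        # AI may only recommend
    AUTO = "auto"                # AI may act within guardrails
    NEEDS_APPROVAL = "approval"  # AI acts only after a named human approves


@dataclass
class Policy:
    # Hypothetical rules; a real policy would be defined by the organisation.
    rules: Dict[str, Mode]
    kill_switch: bool = False
    audit_log: List[str] = field(default_factory=list)

    def decide(self, action: str, approved_by: Optional[str] = None) -> str:
        if self.kill_switch:
            outcome = "blocked: kill switch engaged"
        else:
            # Guardrail: anything not explicitly listed stays advisory.
            mode = self.rules.get(action, Mode.ADVISORY)
            if mode is Mode.AUTO:
                outcome = "executed"
            elif mode is Mode.NEEDS_APPROVAL and approved_by:
                outcome = f"executed: approved by {approved_by}"
            elif mode is Mode.NEEDS_APPROVAL:
                outcome = "pending: human approval required"
            else:
                outcome = "recommendation only"
        # Every decision is recorded so it can be observed and reviewed later.
        self.audit_log.append(f"{action} -> {outcome}")
        return outcome


policy = Policy(rules={
    "route_ticket": Mode.AUTO,
    "rank_candidates": Mode.ADVISORY,
    "escalate_employee_case": Mode.NEEDS_APPROVAL,
})
```

The design choice that matters most here is the default: an action nobody has classified is treated as advisory, so autonomy has to be granted explicitly and is never assumed.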

In practical terms, organisations should be asking better questions before scaling AI-enabled autonomy:

- Which decisions are advisory, and which can trigger action?

- Where is human review essential because people are materially affected?

- Who owns the outcome if the system is wrong?

- Where can employees or managers challenge the logic or result?

- How do we monitor cumulative effects when multiple AI-enabled workflows interact?

I can understand if you think that these are questions of fear. But they are not. They are questions of maturity. Organisations that answer them early will be in a far better position than those that wait for trust to break before they respond.

Four actions that make accountability visible

As we see AI becoming more embedded in our day-to-day workflow, the real challenge is no longer only about capability. It is also about ownership. The more autonomy your organisation introduces, the more consciously it needs to design accountability. Otherwise, speed increases while clarity diminishes. That is where the responsible use of AI begins to matter most.

1. Clarify decision architecture before scaling autonomy

Be explicit about what AI is allowed to do, what remains advisory, and where human judgment must stay decisive. Indecision now can be costly later. It leads to confusion, inconsistent behaviour, and unnecessary risk.

2. Define named ownership for outcomes

Someone may own the system technically, but who owns the consequence operationally? Those are not always the same thing. Organisations must show clear accountability for their outcomes. This is especially important when decisions impact people, customers, or risk.
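The difference between technical and operational ownership can be written down very simply. The sketch below is only an illustration: the workflow names, the roles, and the `missing_outcome_owners` helper are hypothetical. It keeps the two kinds of owner side by side and lists every AI-enabled workflow that has no named person answering for the outcome.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass(frozen=True)
class Ownership:
    system_owner: str   # maintains the system technically
    outcome_owner: str  # answers for the consequence operationally


# Hypothetical workflows and roles, for illustration only.
REGISTRY: Dict[str, Ownership] = {
    "candidate_ranking": Ownership("ml_platform_team", "head_of_recruitment"),
    "case_prioritisation": Ownership("it_operations", ""),
}


def missing_outcome_owners(registry: Dict[str, Ownership]) -> List[str]:
    """Return the workflows that have no named outcome owner."""
    return sorted(
        name for name, owners in registry.items()
        if not owners.outcome_owner.strip()
    )


print(missing_outcome_owners(REGISTRY))
```

A check like this could run before a workflow is allowed to scale, so that a gap in ownership is found at design time and not after a decision has already gone wrong.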

3. Build challenge and override options into the design

Employees, managers, or teams have to be able to question an AI-supported outcome. Human review, escalation routes, and override rights are not signs of weak automation. They are signs of responsible design.
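As a rough sketch of what "built into the design" could mean, the example below gives every AI-supported decision a challenge route and an override that records who took responsibility. The `Decision` class and its field names are assumptions made for illustration, not a reference to an existing tool.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class Decision:
    subject: str
    ai_outcome: str
    final_outcome: str = field(init=False, default="")
    decided_by: str = field(init=False, default="ai")
    history: List[str] = field(default_factory=list)

    def __post_init__(self) -> None:
        # Until a human intervenes, the AI outcome stands.
        self.final_outcome = self.ai_outcome

    def challenge(self, raised_by: str, reason: str) -> None:
        """Anyone affected can question the outcome; the challenge is kept on record."""
        self.history.append(f"challenged by {raised_by}: {reason}")

    def override(self, reviewer: str, new_outcome: str, reason: str) -> None:
        """A named reviewer replaces the outcome and becomes its visible owner."""
        self.final_outcome = new_outcome
        self.decided_by = reviewer
        self.history.append(f"overridden by {reviewer}: {reason}")


# Hypothetical usage: an employee questions an automated flag.
d = Decision(subject="performance_review_2031", ai_outcome="flagged")
d.challenge("employee", "absence was approved leave")
d.override("line_manager", "not flagged", "leave confirmed in HR records")
```

The point of the record is not the code itself. It is that the original AI outcome, the challenge, and the human who changed the result all remain visible afterwards.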

4. Redesign workflow governance

The real issue rarely sits in one tool alone. It emerges when several AI-enabled decisions interact across your organisation. That is why your governance has to look across flows, not just within one isolated operation. Start with central coordination and visibility, not fragmented oversight after the fact.

Making accountability visible is not about slowing your innovation down. It is about making sure autonomy remains trustworthy as it scales. Organisations that succeed will move faster and with more clarity. They will build stronger trust and face fewer surprises when it comes to decisions, risks, or consequences.

What this means for leaders and HR

This is not a technology conversation that leaders can delegate away, nor is it only an IT governance issue. It sits right at the intersection of organisational design, trust, workflow, and human experience.

For HR, this means becoming much more intentional about how AI enters people-related processes. For leaders, this means not confusing AI-generated confidence with real accountability. For both, it means focusing on what AI can enhance and how its use alters the sense of responsibility throughout the organisation.

That is where the deeper organisational choice sits. AI can either deepen clarity or deepen confusion. It can either help organisations move with more confidence or create a more polished version of the old state of uncertainty. The difference will not be determined by capability alone. It will be determined by whether accountability is designed with the same seriousness as autonomy.

The real test

The real test is not whether AI becomes more autonomous. That is already happening. The real test is whether organisations become more disciplined about ownership as a result.

If they do, AI can genuinely strengthen capability while protecting trust. Work can really move faster without becoming less human. Teams can rely on intelligent systems without losing the ability to question, interpret, and take responsibility. Autonomy can become a strength because accountability gives it shape.

If they do not, the opposite will happen. Speed will rise, but so will uncertainty. Decisions will feel more distant. Ownership will become harder to locate. And your organisation may discover too late that what looks like progress was actually the erosion of trust.

That is why this moment matters. Not because AI is taking over, but because it is forcing organisations to become much more honest about how accountability is designed. As AI moves closer to action, accountability has to move closer to the surface. Without that, autonomy is not a sign of maturity. It is simply a risk that looks like effectiveness.

I can imagine that this question is starting to surface in your organisation as well. That is often a useful signal in itself. It usually means the conversation has moved beyond tools and into the deeper terrain of how work, trust, and ownership are actually designed. That is where the most valuable shifts tend to begin. And if you would value a sparring partner in that conversation, you are welcome to reach out via twinxter.com.